I have recently realized that I have not posted here for almost precisely two years, which is an unforgivable pause for a blogger. I hope my readers will forgive me for this. Thinking about what I should write next, I realized that I had entirely forgotten about an essential thing. The thing, I would probably say: it is not only about what I want to write, but also about what my readers may be interested in or even need assistance with.

Having published my first book last October, I plead guilty to checking the stats on Amazon, perhaps a bit too often. The good thing is that it also gives me the opportunity to look for books that are interesting to me. This is how I came across “Understanding and Using C Pointers: Core Techniques for Memory Management” by Richard M Reese (the book may be found here for those interested). My first thought was “why would someone write an entire book on a thing like pointers?” But then, thinking to myself that books are not written merely for the sake of having been written, I took a glance at the free sample and took some time to read the comments.

Of course, the book is not dedicated to pointers only. It is a somewhat detailed explanation of what memory means in C and, although I have not had a chance to read the whole book, I highly recommend it to those of my readers who are interested in C programming and want to do it the right way.

All this has reminded me that pointers in C are too often a problematic topic for many students. I remember it making a few honors students in my college class scratch their heads trying to understand what it is. At the time we were childish and cruel and used to say that if something is not understood, “the problem is in the shim between the chair and the screen.” Although correct in many cases, this statement is not applicable when it comes to pointers (well, at least up to a certain level).

When it comes to pointers, the problem is mainly the way they are presented. On the one hand, students are often given information that is utterly redundant at that stage; on the other hand, they lack a basic understanding of the underlying architecture. And by the word “architecture” I mean both hardware and software.

Clearly, the problem is the way they, students, are taught. This thread on Stack Exchange serves as a perfect illustration of the fact that students are shown things in reverse order. Why not start building houses with the roof then? How is a student supposed to understand pointers if he/she does not know what the structure of a program is and how it works at lower levels? Instead, they are told how dangerous and unneeded Assembly is.

However, the idea behind this post is not to argue curricula – there are

many other people smart enough to spoil it properly without the help of others.

Let us concentrate on the technical aspects in an attempt to make the subject

clearer.

Memory

First of all, we need to make it clear to ourselves what computer memory is. To put it simply, computer memory is an array of cells, each capable of storing a certain amount of information. In our case, talking about Random-Access Memory, each addressable cell is capable of storing one byte of data. It is this array of cells that holds all programs being executed at the moment and their data. While memory management is quite an exciting and broad topic by itself, we will not dive into it, as it is simply not relevant here. The last two things worth mentioning about memory in the context of pointers are that each cell has an address and that, since we decided not to talk about memory management, “memory” will mean the memory of a running process.

Variables

When we declare a variable in our code, regardless of whether it is Assembly or C code, we just tell the compiler two things: to refer to a specific memory area by its alias (the name of the variable), and that only a particular type of data may be stored in that area. For example:

int myInteger;

This tells the compiler to name a specific area in memory myInteger (it is the compiler that decides what area it is, and we do not care about that at this point) and that we may only store a value of type int there. From now on we may use the name of the variable (which is an alias of the memory area) in our code, and the compiler will know which address to operate on.

The line above would be equivalent to this statement in Assembly (Flat

Assembler syntax as usual) in case we are declaring a global variable (yes, in

case of Assembly it does matter):

myInteger dd ?

In case of a local variable, however, it would be a bit different:

push ebp
mov ebp, esp
sub esp, 4
virtual at ebp - 4
myInteger dd ?
end virtual

But, in both cases, we are doing the same – telling the compiler to label

specific memory area as myInteger and use it for storage of an integer value.

Sometimes, however, we need to know the address of a variable. For example, we may expect some function to modify the content of a local variable in addition to returning some value. The variable is out of the scope of that function, so the only way for the function to reach it is through its address.
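To see why the address is needed, here is a minimal sketch of what happens without it. The names increment_copy, demo_copy and myCounter are hypothetical and exist only for this illustration: the function receives a copy of the value, so whatever it does to the copy never reaches the caller's variable.

```c
#include <assert.h>

/* Hypothetical helper: receives a COPY of the caller's variable. */
int increment_copy(unsigned int counter)
{
    counter++;              /* changes the local copy only */
    return counter == 0;    /* 1 if the copy wrapped around to 0 */
}

/* Shows that the caller's variable is left untouched. */
unsigned int demo_copy(void)
{
    unsigned int myCounter = 5;

    increment_copy(myCounter);
    assert(myCounter == 5); /* still 5: the function never saw its address */
    return myCounter;
}
```

Calling demo_copy() returns 5, not 6. To change myCounter itself, the function would have to be told where myCounter lives.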

Pointers

Imagine the following situation – we have an unsigned integer counter and

a function that increments it. The function returns 0 all the time unless an

overflow occurs, meaning the counter has been reset to 0. The prototype would

be something like this:

int increment_counter(unsigned int* counter);

As we see, the “unsigned int* counter” declares a function parameter as a pointer to an unsigned integer. This, in turn, means that the function expects the address of a variable of type unsigned int. The ‘*’ in a variable declaration tells the compiler that the variable is of a special kind, which may be denoted as “a pointer to a variable of type n” where n is the declaration of data type (e.g., int, short, char, etc.). It may simplify things a bit if we replace the word “pointer” with the word “address.” This way, the declaration of the function parameter may be interpreted as:

“counter is a pointer to a variable of type unsigned int.”

or, to make it even simpler

“counter is a variable that may contain an address of a variable of type unsigned int.”

Alright, it should be understandable by now. If it is not, please feel

free to use the comments section below for your remarks.

Assigning values to pointers

As we know by now, pointers are a particular type of variable that may only contain addresses. Therefore, we cannot merely assign them an arbitrary value (in fact we can, but this is out of the scope of this post). Instead, we need to ask the compiler for the address of an existing variable. Thus, if we want to call the increment_counter() function and pass myInteger as a parameter (strictly speaking, myInteger would have to be declared unsigned int to match the prototype), we do it like this:

increment_counter(&myInteger);

Where the ‘&’ operator tells the compiler to use the address where the

myInteger is stored rather than the value which is stored there. Therefore, we

may interpret this like:

increment_counter(take the

address of the myInteger variable)

or

increment_counter(take the

address labeled as myInteger).

The equivalent in Assembly (regardless of whether the variable is local or global) would be:

lea eax, [myInteger] ; load EAX register with the address of myInteger
push eax ; pass it as a function parameter
call increment_counter ; call the function

Although I decided to use a function call as an example here, I cannot

skip direct assignment of pointers. Let’s introduce another variable called

myIntegerPtr which would be a pointer to a variable of type int. Then our

declarations would look like this:

int myInteger;

int* myIntegerPtr;


So, just for the sake of the example, let’s use myIntegerPtr as a parameter

to be passed to the increment_counter() function. In such case, we need to

first assign it the address of myInteger:

myIntegerPtr = &myInteger;

Which means:

Take the address of myInteger

(or address labeled as myInteger) and store it in the myIntegerPtr variable.
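The assignment can be checked with a short sketch. The function name demo_assignment and the initial value 42 are assumptions made for this illustration; the point is only that, after the assignment, the pointer holds exactly the address that ‘&’ yields for the variable.

```c
#include <assert.h>
#include <stdio.h>

/* Returns 1 when the pointer holds exactly the address of the variable. */
int demo_assignment(void)
{
    int myInteger = 42;
    int* myIntegerPtr;

    myIntegerPtr = &myInteger;   /* store the address of myInteger */

    /* %p expects a void*, hence the casts */
    printf("address of myInteger:  %p\n", (void*)&myInteger);
    printf("value of myIntegerPtr: %p\n", (void*)myIntegerPtr);

    assert(myIntegerPtr == &myInteger);
    return myIntegerPtr == &myInteger;
}
```

The two printed addresses are the same, although the actual number differs from run to run and from machine to machine.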

Retrieving values pointed to by pointers

Now that we know what pointers are (and they are just variables storing

addresses), we still need to know how to access the values they are pointing

at. This process is called “dereferencing the pointer.” For the sake of

example, let’s examine the body of the increment_counter() function:

int increment_counter(unsigned int* counter)
{
    /* 0xffffffff assumes a 32-bit unsigned int */
    return ((*counter)++ == 0xffffffff) ? 1 : 0;
}

The expression *counter means “take the integer value stored

at the address contained in counter.”
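Below is a minimal sketch of reading and writing through a pointer. It repeats increment_counter() with one swap that is mine rather than the original's: UINT_MAX from <limits.h> replaces the hard-coded 0xffffffff, so the check does not depend on unsigned int being 32 bits wide. The names demo_dereference, myCounter and counterPtr are hypothetical.

```c
#include <assert.h>
#include <limits.h>
#include <stddef.h>

/* Same logic as the function above, with UINT_MAX instead of 0xffffffff. */
int increment_counter(unsigned int* counter)
{
    assert(counter != NULL);
    return ((*counter)++ == UINT_MAX) ? 1 : 0;
}

/* Writes and reads a variable through a pointer to it. */
unsigned int demo_dereference(void)
{
    unsigned int myCounter = 7;
    unsigned int* counterPtr = &myCounter;

    *counterPtr = 10;                 /* write through the pointer */
    increment_counter(counterPtr);    /* myCounter becomes 11 */
    return *counterPtr;               /* read through the pointer */
}
```

Here demo_dereference() returns 11, and increment_counter() returns 1 only for the call that wraps the counter from UINT_MAX back to 0.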

Pointer arithmetic

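You have definitely noticed the brackets around *counter. Should we omit them, the postfix ++ would bind tighter than *, and *counter++ would be parsed as *(counter++). The value would still be read from the original address, but it is the pointer (in fact, the address) that would be incremented, not the counter itself. The counter would never change, and any later access through the incremented pointer would read from an unintended location, which may, in certain circumstances, cause an access violation exception.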
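A small sketch of the difference the brackets make. The function names with_brackets and without_brackets are hypothetical, and the second function deliberately never dereferences the pointer after it has moved, because that would be an invalid access.

```c
#include <assert.h>

/* (*p)++ increments the value stored at the address held in p. */
unsigned int with_brackets(void)
{
    unsigned int counter = 5;
    unsigned int* p = &counter;

    (*p)++;
    assert(p == &counter);       /* the pointer itself did not move */
    return counter;              /* 6 */
}

/* *p++ is parsed as *(p++): read the value, then move the pointer. */
unsigned int without_brackets(void)
{
    unsigned int counter = 5;
    unsigned int* p = &counter;
    unsigned int value;

    value = *p++;
    assert(value == 5);          /* the read used the original address */
    assert(p == &counter + 1);   /* p moved on and must not be dereferenced */
    return counter;              /* still 5 */
}
```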

This brings us to the topic of pointer arithmetic. There is nothing particularly special about it, and, although everything may be put in a single sentence, let’s still review an example.

Say we have a string

char s[] = "Hello, World!\n";

And we want to calculate its length (in fact implement a strlen() function

of our own). We, of course, may treat the s as an array of char and check every

member for being 0 by trivially indexing into the array. However, for the sake

of example and in accordance with our topic, we will use a pointer:

char* cp;

So, cp is a pointer to a variable of type char. The first thing we do is assign cp the address of the first char in s:

cp = &s[0];

Of course, we could have written “cp = s,” but we are not going to dive

into arrays here. Besides, the above notation is, in my opinion, a bit more

demonstrative.

The next steps would be:

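1. Compare the char pointed to by cp against 0;

2. If it equals 0, break out of the loop;

3. Otherwise, increment the pointer: cp++;

4. Go to step 1.

Once the loop ends, the difference between cp and the address of the first char is the length of the string.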
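The steps above can be sketched as follows. The name my_strlen is hypothetical, chosen so as not to clash with the standard strlen(), and the length is obtained by subtracting the start address from the final value of the pointer.

```c
#include <assert.h>
#include <stddef.h>

/* Counts the chars of a zero-terminated string by walking a pointer. */
size_t my_strlen(const char* s)
{
    const char* cp;

    assert(s != NULL);
    cp = &s[0];                /* address of the first char */
    while (*cp != 0) {         /* compare the char pointed to by cp against 0 */
        cp++;                  /* not 0 yet: move on to the next char */
    }
    return (size_t)(cp - s);   /* distance between the two addresses, in chars */
}
```

For the string s declared above, my_strlen(s) gives 14: thirteen visible characters plus the newline.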

Still nothing special here, but that is because we were operating on an array of char, and a char is always exactly one byte in C. Had we been dealing with an array of shorts or integers, we would still write “cp++,” but the actual effect would differ.

It is essential to understand that adding a value to or subtracting it from a pointer, in fact, means adding or subtracting that value times the size of the type the pointer points to.

For example, working with a pointer to an integer:

myIntegerPtr – contains the address of myInteger;

myIntegerPtr++ would make it contain the address of myInteger + sizeof(int)

bytes (which, in this case, is 4).

myIntegerPtr += 2 would make it contain the address of myInteger + 2 *

sizeof(int) and so on, and so forth.
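The scaling can be observed directly by comparing addresses as byte addresses. This is a minimal sketch; the function name bytes_advanced and the four-element array are assumptions made for the illustration, and the pointer is kept within the array (or one past its end), which is the range C allows.

```c
#include <assert.h>
#include <stddef.h>

/* Returns by how many bytes the address grows when n is added to an int*. */
size_t bytes_advanced(size_t n)
{
    int values[4] = { 10, 20, 30, 40 };
    int* p = &values[0];
    int* q;

    assert(n <= 4);            /* stay within the array or one past its end */
    q = p + n;                 /* the compiler scales n by sizeof(int) */

    /* casting to char* lets us measure the distance in bytes */
    return (size_t)((char*)q - (char*)p);
}
```

bytes_advanced(1) equals sizeof(int) and bytes_advanced(2) equals 2 * sizeof(int), whatever sizeof(int) happens to be on the given platform.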

Pointer to a pointer

Last thing to mention for today – pointer to a pointer, which merely means

“a variable containing the address of a variable containing the address of a

variable of a certain type.”

We are all familiar with the following construct:

int main(int argc, char** argv)

{

}


where char** argv means “argv is a pointer to a pointer to a variable of

type char.” And in fact, argv points to an array of pointers to strings

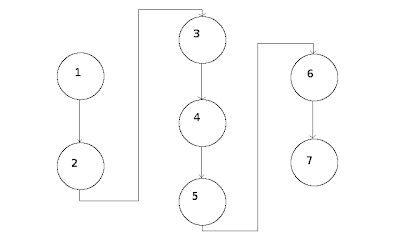

representing command line parameters:

Argv à

Param0 à

Param1 à

…

ParamN à

Dereferencing such constructs is not much harder than dereferencing simple

pointers. For example:

*argv – gives us Param0

**argv – gives us the char pointed to by Param0

*(argv + 1) – gives us Param1

*(*(argv + 1)) – gives us the char pointed to by Param1

*(*(argv + 1) + 1) – gives us the char at Param1 + 1
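The expressions above can be wrapped into a small sketch. The function name param_char is hypothetical; it picks one character of one parameter using pointer notation only.

```c
#include <assert.h>
#include <stddef.h>

/* Returns the char at position index of parameter number param. */
char param_char(char** argv, int param, int index)
{
    assert(argv != NULL);
    /* argv + param      : address of the pointer to the parameter      */
    /* *(argv + param)   : the pointer to the first char of that string */
    /* ... + index       : address of the wanted char                   */
    return *(*(argv + param) + index);
}
```

With a hand-made array such as char* args[] = { prog, hello, NULL }, where prog holds "prog" and hello holds "hello" (both made up for the example), param_char(args, 1, 1) gives 'e', the same as *(*(args + 1) + 1).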

You would hardly ever need to use more than two or, in the worst case,

three levels of indirection. If you do, you should probably revise your code.

P.S. This is about as much as is needed to understand what pointers are and how to use them. Other topics, such as memory management, do involve pointers but are entirely different matters.

Hope this post was helpful.